Large Language Models (LLMs) have been the talk of the town due to their various capabilities that can almost replace humans. While models from OpenAI and Google have made a significant impact, there is another contender for the throne: Anthropic.

Anthropic is an AI company that is building some amazing models to compete with popular models from major providers. Anthropic recently released Claude 3, a multimodal LLM that is getting attention in the AI market. We’ll take a closer look at how the Claude 3 model works, and its multimodal capabilities.

What is a multimodal model?

We all agree that the LLMs have limitations in terms of creating/providing accurate information, which can be tracked through different techniques. But, what if we have models that take not just natural language as input, but also images and videos to provide accurate information for users? Isn’t this a fantastic idea? Models that take more than one form of input and understand different modalities (text, image, audio, video, etc.) are known as multimodal models. Google, OpenAI and Anthropic each have their multimodal models with better capabilities already in the market.

OpenAI’s GPT-4 Vision, Google’s Gemini and Anthropic’s Claude 3 series are some notable examples of multimodal models that are revolutionizing the AI industry.

Claude 3

Anthropic's Claude 3 is a family of three AI models: Claude 3 Opus, Sonnet and Haiku. These models have the multimodal capabilities that closely compete with GPT-4 and Gemini-ultra. These Claude 3 models have the right balance of cost, safety and performance.

The image displays a comparison chart of various LLMs' performance across different tasks, including math problem-solving, coding and knowledge-based question answering. The models include Claude variants, GPT-4, GPT-3.5 and Gemini, with their respective scores in percentages. As you can see, the Claude 3 models perform robustly across a range of tasks, often outperforming other AI models presented in the comparison.

Claude's Haiku is the most affordable — yet effective — model that can be used for real-time response generation in customer service use cases, content moderation, etc. with higher efficiency.

You can easily access Claude models from Anthropic’s official website to see how it works for your queries. You can transcribe handwritten notes, understanding how objects are used and complex equations.

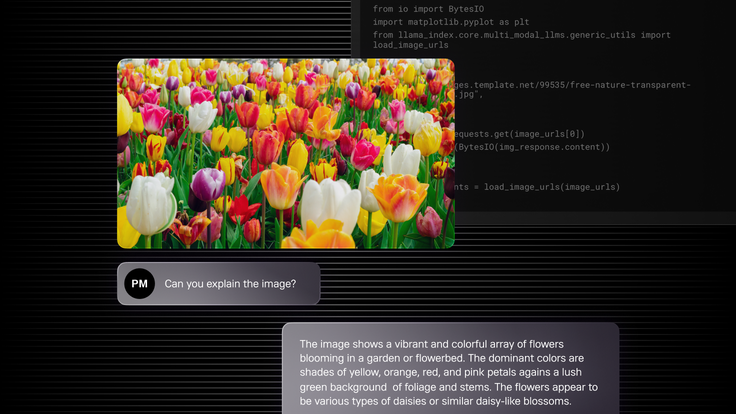

I tried an example by sharing an image I found online to see if the Claude model can respond accurately.

Amazing, that is an impressive response with all the minute details about the image shared.

Claude 3 multimodal tutorial

We will be using the SingleStore database as our vector store, and SingleStore Notebooks (just like Jupyter notebooks) to run all our code.

Also we will be using LlamaIndex, an AI framework to build LLM-powered applications. It provides a complete toolkit for developers building AI applications by providing them with different APIs and the ability to integrate data sources, various file formats, databases to store vector data, applications, etc.

We will be importing the libraries required, running the multimodal model from Anthropic [claude-3-haiku-20240307], storing the data in the SingleStore and retrieving it to see the power of multimodality through text and image.

Let’s explore the capabilities of the model on text and vision tasks. Get started by signing up for SingleStore. We are going to use SingleStore’s Notebook feature to run our commands, and SingleStore as the database to store our data.

Once you sign up for the first time, you need to create a workspace and a database to store your data. Later in this tutorial, you will also see how you can store data as embeddings.

Creating a database is easy. Just go to your workspace and click Create Database as shown here.

Go back to the main SingleStore dashboard. Click on Develop to create a Notebook.

Next, click on New Notebook > New Notebook.

Create a new Notebook and name it whatever you’d like.

Now, get started with the notebook code shared here. Make sure to select your workspace and database you created.

Start adding all the code shown below step-by-step in the notebook you just created.

Install LlamaIndex and other libraries if required.

1!pip install llama-index --quiet2!pip install llama-index-llms-anthropic --quiet3!pip install llama-index-multi-modal-llms-anthropic --quiet4!pip install llama-index-vector-stores-singlestoredb --quiet5!pip install matplotlib --quiet6 7from llama_index.llms.anthropic import Anthropic8from llama_index.multi_modal_llms.anthropic import AnthropicMultiModal

Set API keys. Add both OpenAI and Anthropic API keys.

1import os2os.environ["ANTHROPIC_API_KEY"] = ""3os.environ["OPENAI_API_KEY"] = ""

Let’s use the Claude 3’s Haiku model for chat completion

1llm = Anthropic(model="claude-3-haiku-20240307")2response = llm.complete("SingleStore is ")3print(response)

1!wget 2'https://raw.githubusercontent.com/run-llama/llama_index/main/docs/docs/ex3amples/data/images/prometheus_paper_card.png' -O 4'prometheus_paper_card.png'5 6from PIL import Image7import matplotlib.pyplot as plt8 9img = Image.open("prometheus_paper_card.png")10plt.imshow(img)

Load the image

1from llama_index.core import SimpleDirectoryReader2 3# put your local directore here4image_documents = SimpleDirectoryReader(5 input_files=["prometheus_paper_card.png"]6).load_data()7 8# Initiated Anthropic MultiModal class9anthropic_mm_llm = AnthropicMultiModal(10 model="claude-3-haiku-20240307", max_tokens=30011)

Test the query on the image

1response = anthropic_mm_llm.complete(2 prompt="Describe the images as an alternative text",3 image_documents=image_documents,4)5 6print(response)

You can test many image examples

1from PIL import Image2import requests3from io import BytesIO4import matplotlib.pyplot as plt5from llama_index.core.multi_modal_llms.generic_utils import 6load_image_urls7 8image_urls = [9 10"https://images.template.net/99535/free-nature-transparent-background-117g9hh.jpg",12]13 14img_response = requests.get(image_urls[0])15img = Image.open(BytesIO(img_response.content))16plt.imshow(img)17 18image_url_documents = load_image_urls(image_urls)

Ask the model to describe the mentioned image

1response = anthropic_mm_llm.complete(2 prompt="Describe the images as an alternative text",3 image_documents=image_url_documents,4)5 6print(response)

Index into a vector store

In this section, we’ll show you how to use Claude 3 to build a RAG pipeline over image data. We first use Claude to extract text from a set of images, then index the text with an embedding model. Finally, we build a query pipeline over the data.

1from llama_index.multi_modal_llms.anthropic import AnthropicMultiModal2anthropic_mm_llm = AnthropicMultiModal(max_tokens=300)3!wget 4"https://www.dropbox.com/scl/fi/c1ec6osn0r2ggnitijqhl/mixed_wiki_images_small.5zip?rlkey=swwxc7h4qtwlnhmby5fsnderd&dl=1" -O mixed_wiki_images_small.zip6!unzip mixed_wiki_images_small.zip7 8from llama_index.core import VectorStoreIndex, StorageContext9from llama_index.embeddings.openai import OpenAIEmbedding10from llama_index.llms.anthropic import Anthropic11from llama_index.vector_stores.singlestoredb import SingleStoreVectorStore12from llama_index.core import Settings13from llama_index.core import StorageContext14import singlestoredb15 16# Create a SingleStoreDB vector store17os.environ["SINGLESTOREDB_URL"] =18f'{connection_user}:{connection_password}@{connection_host}:{connection_port}/19{connection_default_database}'20 21vector_store = SingleStoreVectorStore()22 23# Using the embedding model to Gemini24embed_model = OpenAIEmbedding()25anthropic_mm_llm = AnthropicMultiModal(max_tokens=300)26 27storage_context = StorageContext.from_defaults(vector_store=vector_store)28 29index = VectorStoreIndex(30 nodes=nodes,31 storage_context=storage_context,32)

Testing the query

1from llama_index.llms.anthropic import Anthropic2 3query_engine = index.as_query_engine(llm=Anthropic())4response = query_engine.query("Tell me more about the porsche")5 6print(str(response))

The complete notebook code is here for your reference.

Multimodal LLMs: Industry specific use cases

There are many use cases where multimodal plays an important role and enhances LLM applications — here are the most top of mind:

- Healthcare. Since multimodal models can process various input types, these can be highly effective in the healthcare industry. The models can take prescriptions, procedures, X-rays and other imagery as input, combining both the text and image to come up with high quality responses and remedies.

- Customer support chatbots. Multimodal models can be highly effective as sometimes text might not be sufficient in resolving an issue, so companies can ask for screenshots and images. This way, using a multimodal-powered application can enhance answering capabilities.

- Social media sentiment analysis. This can be cleverly done by multimodal-powered applications since they can identify images and text as well.

- eCommerce. Product search and recommendations can be simplified using multimodal models, since they get more contextual information from the user with many input capabilities.

Multimodal models are revolutionizing the AI industry, and we will only see more powerful models in the future. While unimodal models have the limitations of processing only one type of input (mostly text), multimodal models gain an added advantage here by processing various input types (text, image, audio, video, etc). Multimodal models are paving the future for building LLM-powered applications for more context and high performance.

Start your free SingleStore trial today.